AWS Outage Disrupts Global Operations, Exposes Infrastructure Reliance Risks

The digital landscape is a vast, interconnected web that often feels invisible until the moment it snaps. On the evening of October 19, 2025, that invisibility vanished as a sudden technical storm brewed within the servers of Amazon Web Services. What began as a quiet glitch in the late hours of the night rapidly evolved into a widespread crisis that dragged well into the following day. The epicenter of the disruption was the US-EAST-1 region located in Northern Virginia, a critical geographic hub that serves as the backbone for a staggering percentage of the global internet’s daily traffic.

The root of the chaos was remarkably small compared to the scale of its impact. According to technical post-mortems, the failure originated from a race condition within the automated Domain Name System management software for DynamoDB. This service functions as AWS’s primary NoSQL database, providing the storage foundation for countless applications. In this instance, two automated processes attempted to update the same DNS entries simultaneously. Rather than one update cleanly winning, the collision left the records corrupted or entirely empty, which effectively erased the path to the database.
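To make the failure mode concrete, here is a minimal sketch of one way two uncoordinated writers plus a cleanup step can leave a record empty. It is an illustration only, not AWS's code: the `DnsRecord` type, the version numbers, the IP addresses, and the specific interleaving (a delayed older update landing after a newer one) are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class DnsRecord:
    """A toy DNS record: the plan version that wrote it and its IP list."""
    version: int = 0
    ips: list[str] = field(default_factory=list)


def apply_unchecked(record: DnsRecord, version: int, ips: list[str]) -> None:
    """Write a plan without re-checking freshness at write time (racy)."""
    record.version = version
    record.ips = list(ips)


def apply_checked(record: DnsRecord, version: int, ips: list[str]) -> bool:
    """Write a plan only if it is newer than what is already live."""
    if version <= record.version:
        return False  # stale plan: refuse to overwrite newer data
    record.version = version
    record.ips = list(ips)
    return True


def cleanup(record: DnsRecord, newest_version: int) -> None:
    """Delete data that belongs to plans older than the newest known plan."""
    if record.version < newest_version:
        record.ips = []


def run(apply) -> DnsRecord:
    """Replay one hypothetical interleaving of two workers and a cleanup."""
    record = DnsRecord()
    # Worker B finishes first with the newer plan (version 2).
    apply(record, 2, ["10.0.0.2"])
    # Worker A was delayed and only now lands its older plan (version 1).
    apply(record, 1, ["10.0.0.1"])
    # Worker B then cleans up everything older than version 2.
    cleanup(record, newest_version=2)
    return record


racy = run(apply_unchecked)
safe = run(apply_checked)
print("unchecked:", racy.ips)  # the record ends up with no addresses
print("checked:  ", safe.ips)  # the newer plan survives
```

The only difference between the two runs is that the checked writer compares versions at the moment it writes, so the stale update is rejected and the cleanup step has nothing to delete.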

As the morning of October 20 dawned, the localized error began to ripple outward through the AWS ecosystem. Without functional DNS resolution, other foundational services found themselves wandering a dark hallway, unable to locate the data they needed to operate. The failure cascaded through Elastic Compute Cloud virtual servers, Lambda functions, and various load balancers. Container orchestration tools like ECS and EKS soon followed, transforming a specific database error into a comprehensive regional blackout that paralyzed some of the world’s most recognizable digital brands.

The human and economic impact was immediate and visceral. Across the globe, millions of users found themselves staring at loading wheels and error messages. Social media giants like Snapchat and Reddit went dark, while entertainment staples like Netflix, Roblox, and Fortnite saw their active user bases evaporate. Productivity slowed to a crawl as Slack connections dropped, leaving remote teams unable to communicate. Even the financial sector felt the sting, with major cryptocurrency and stock trading platforms like Coinbase and Robinhood struggling to maintain basic functionality.

Beyond social media and gaming, the outage seeped into the essential machinery of daily life. Food delivery apps failed to process orders, and mobile banking applications locked out users attempting to manage their finances. In more critical sectors, the consequences were even more sobering. Hospital communication systems faced delays, smart home devices became unresponsive, and major carriers like United Airlines dealt with booking and check-in failures. The digital threads that hold modern society together were briefly, but violently, unraveled.

Public awareness of the scale grew as monitoring sites lit up with reports. Downdetector recorded a staggering 17 million user reports of the outage, a figure that solidified the event as one of the most significant cloud disruptions of the decade. While the core issues were addressed within the first half of the day, the total duration of the event spanned over 15 hours. Certain peripheral services, including AWS Config and Redshift, required even more time to reach full stability as engineers worked through the backlog of failed requests.

The response from Amazon’s engineering teams was a massive exercise in high-stakes troubleshooting. Once the DNS race condition was identified as the culprit, the company moved away from its automated systems to perform manual interventions. Engineers worked around the clock to clear conflicts, reroute traffic, and meticulously restore service levels. Their efforts were supported by a commitment to transparency that helped manage the rising tide of public frustration. The AWS Health Dashboard provided a continuous stream of updates, offering a window into the recovery process.

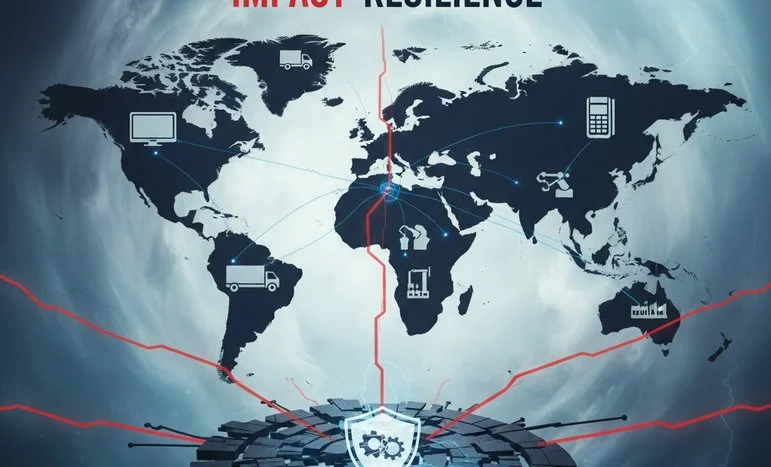

Despite the eventual restoration of service, the event served as a jarring reminder of the risks inherent in centralized cloud computing. For years, businesses have flocked to providers like AWS for their cost-efficiency and ease of use. Many of these organizations, however, had unknowingly built “castles in the sand” by concentrating their entire infrastructure in a single region like US-EAST-1. The outage proved that even sophisticated setups utilizing multiple availability zones were not immune when a foundational service like DynamoDB experienced a core failure.

The financial repercussions of the 15-hour window were significant. E-commerce retailers watched helplessly as potential customers abandoned their carts during checkout. Streaming platforms lost revenue and faced a wave of customer service complaints. In the world of software development, DevOps teams saw their deployment pipelines freeze, delaying critical product launches. For industries like logistics and healthcare, the blackout translated into delayed shipments and slower patient care, highlighting that cloud reliability is no longer just a technical metric, but a matter of public safety.

In the aftermath of the October storm, a fundamental shift in corporate strategy began to take hold. The conversation moved from “cloud-first” to “cloud-resilient.” Industry leaders began to question whether the convenience of a single hyperscaler outweighed the existential risk of a total blackout. This soul-searching has led to a surge in multi-cloud adoption. By spreading workloads across competing providers like Microsoft Azure and Google Cloud, companies are attempting to ensure that a failure in one ecosystem does not result in a total cessation of their business operations.
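The multi-cloud idea described above can be sketched as an ordered failover: try the primary provider and fall through to the next one on error. This is a simplified sketch under stated assumptions; the provider names and the `primary_down` and `secondary_ok` functions are hypothetical stand-ins for real client calls, and it presumes the data has already been replicated to the second provider.

```python
from typing import Callable

Fetcher = Callable[[str], str]


class AllProvidersFailed(Exception):
    """Raised when every configured provider returned an error."""


def fetch_with_failover(
    providers: list[tuple[str, Fetcher]], key: str
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, value) on success."""
    errors = []
    for name, fetch in providers:
        try:
            return name, fetch(key)
        except Exception as exc:  # any provider error triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary_down(key: str) -> str:
    """Hypothetical primary provider that is currently unreachable."""
    raise ConnectionError("DNS resolution failed")


def secondary_ok(key: str) -> str:
    """Hypothetical secondary provider holding a replicated copy."""
    return f"value-for-{key}"


served_by, value = fetch_with_failover(
    [("primary-cloud", primary_down), ("secondary-cloud", secondary_ok)],
    "order-42",
)
print(served_by, value)
```

The hard part in practice is less the retry loop than keeping the secondary copy current, which is why this pattern tends to be applied first to read-heavy or loosely consistent workloads.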

Parallel to the move toward multi-cloud is a renewed interest in hybrid models and edge computing. Some organizations are choosing to keep their most critical data on-premises or within private data centers, using the public cloud only for scalable, non-essential tasks. Meanwhile, the expansion of edge computing is pushing processing power closer to the end-user. By utilizing local gateways and content delivery networks, businesses can maintain basic functionality even if the central “brain” of a cloud provider becomes disconnected.

The rise of decentralized technologies has also gained new momentum following the AWS failure. Systems that distribute data across thousands of independent nodes offer a form of structural redundancy that traditional centralized clouds cannot match. While these technologies were once viewed as the fringe experiments of the Web3 movement, they are increasingly being evaluated by mainstream enterprises. These firms are looking for “antifragile” systems that do not have a single point of failure, ensuring that their data remains accessible regardless of any one company’s technical health.

The urgency of these architectural changes is amplified by the current explosion in artificial intelligence. Modern enterprises now rely on the cloud not just for hosting websites, but for training massive language models and running real-time analytics. An outage in 2025 doesn’t just stop a user from scrolling through a feed; it halts machine learning pipelines and risks the corruption of massive datasets. As AI becomes more deeply integrated into manufacturing and autonomous logistics, near-continuous uptime becomes an operational requirement rather than a lofty goal.

Looking at the broader market, the financial impact of the October outage was estimated to be in the billions when accounting for lost productivity and missed opportunities globally. Interestingly, however, the long-term market valuation of major cloud providers remained relatively stable. Investors appeared to treat the incident as a temporary hurdle in an otherwise dominant industry. Competitors were quick to capitalize on the moment, using the disruption to market their own uptime statistics and regional diversity, sparking a fresh “arms race” in cloud reliability.

For IT departments around the world, the event necessitated a total refresh of disaster recovery protocols. The industry has seen a move toward more aggressive Service Level Agreements and the implementation of regular “chaos testing.” In these drills, engineers intentionally break parts of their own systems to ensure that failover mechanisms work as intended. Additionally, the use of AI-powered monitoring tools has become standard, as these systems can often spot the subtle patterns of a race condition or a cascading failure before it reaches a critical mass.
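A chaos drill of the kind described here can be approximated in a few lines: wrap a dependency so that it fails on purpose, then check that the fallback path still answers every request. The sketch below is a toy under stated assumptions; `FaultInjector`, `lookup_price`, the cached price, and the failure rate are all hypothetical and do not refer to any particular chaos-engineering tool.

```python
import random


class FaultInjector:
    """Wrap a callable and raise ConnectionError at a configured rate."""

    def __init__(self, func, failure_rate: float, seed: int):
        self._func = func
        self._failure_rate = failure_rate
        self._rng = random.Random(seed)  # seeded so drills are repeatable
        self.injected = 0

    def __call__(self, *args):
        if self._rng.random() < self._failure_rate:
            self.injected += 1
            raise ConnectionError("injected fault")
        return self._func(*args)


def lookup_price(item: str) -> int:
    """Hypothetical live dependency, e.g. a pricing service."""
    return 100


def price_with_fallback(lookup, item: str, cached: dict[str, int]) -> int:
    """Use the live lookup, falling back to a cached value on failure."""
    try:
        return lookup(item)
    except ConnectionError:
        return cached[item]


def run_drill(requests: int = 1000, failure_rate: float = 0.3, seed: int = 7):
    """Send requests through the faulty dependency and collect the answers."""
    flaky = FaultInjector(lookup_price, failure_rate, seed)
    cached = {"widget": 95}
    answers = [
        price_with_fallback(flaky, "widget", cached) for _ in range(requests)
    ]
    return flaky.injected, answers


injected, answers = run_drill()
print(f"{injected} faults injected, {len(answers)} requests answered")
```

A drill passes when every request still gets an answer even though some calls were deliberately broken; raising `failure_rate` to 1.0 simulates a full outage of the dependency.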

The October 2025 outage was a paradoxical event: it was both a display of modern vulnerability and a testament to engineering prowess. The fact that such a complex, global system could be diagnosed and repaired in roughly 15 hours is a feat of coordination that would have been impossible a decade ago. Yet, the sheer reach of the disruption served as a warning that our digital eggs are increasingly held in very few baskets. True resilience in the modern age requires a commitment to diversity in infrastructure, moving beyond simple cost-cutting to prioritize stability.

As we move further into a decade defined by hyper-connectivity and artificial intelligence, the lessons of the Northern Virginia blackout will continue to resonate. The winners in the new digital economy will not necessarily be those who move the fastest, but those who can withstand the inevitable storms of a complex system. Infrastructure strength has become the new bedrock of trust, and the ability to remain online during a crisis is now the ultimate competitive advantage. The darkness of October 2025 has passed, but it has left behind a roadmap for a more durable and decentralized future.
